Anthropic Unveils Claude Code Auto Mode: Autonomous Coding with Human Oversight Gates

Anthropic today announced the launch of auto mode for its Claude Code system, enabling multi-step software development workflows with significantly reduced manual intervention while embedding safety checkpoints. The feature is available now and is intended to streamline coding tasks without sacrificing control.

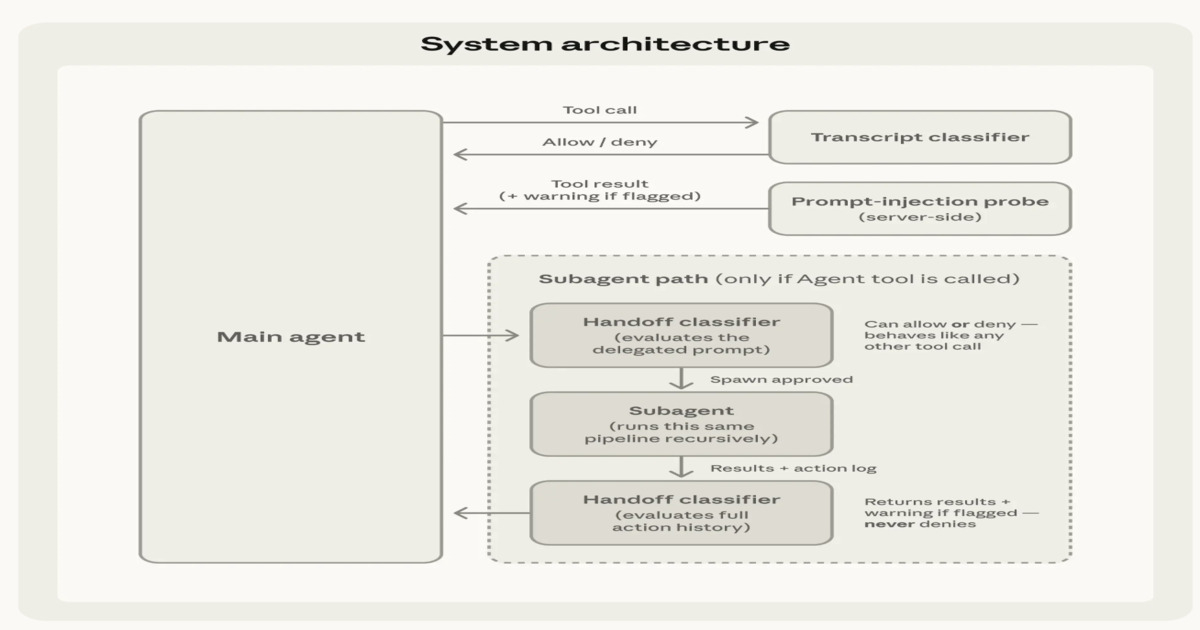

Auto mode combines automated execution with layered safety mechanisms, including input filtering, action evaluation, and a two-stage classification process. Human approval gates remain in place for sensitive operations, ensuring that critical decisions are not left entirely to the AI.

Background

Claude Code, Anthropic's AI-powered coding assistant, has been evolving to handle more complex tasks. The introduction of auto mode marks a shift from single-command assistance to autonomous multi-step workflows.

Safety has been a core concern. Anthropic has previously implemented guardrails for its Claude models, and auto mode extends these principles to coding environments. The two-stage classification helps distinguish between routine and high-risk actions.
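The announcement does not say what the two stages are, so the following is only an illustrative sketch of how a two-stage check might separate routine from high-risk actions. It assumes a cheap first pass that flags anything suspicious, and a closer second pass that runs only on flagged commands. The token list and the names `stage_one`, `stage_two`, and `classify` are hypothetical and are not Anthropic's implementation.

```python
from enum import Enum


class Risk(Enum):
    ROUTINE = "routine"
    HIGH_RISK = "high_risk"


# Hypothetical substrings for the cheap first pass (an assumption, not a real rule set).
SUSPICIOUS_TOKENS = ("rm ", "curl ", "wget ", "sudo ", "git push")

# Hypothetical programs the second pass always treats as high risk.
HIGH_RISK_PROGRAMS = {"rm", "sudo", "curl", "wget"}


def stage_one(command: str) -> bool:
    """Cheap first pass: flag a command if any suspicious substring appears."""
    return any(token in command for token in SUSPICIOUS_TOKENS)


def stage_two(command: str) -> Risk:
    """Closer second pass: look at the program that would actually run."""
    tokens = command.split()
    if not tokens:
        return Risk.ROUTINE
    if tokens[0] in HIGH_RISK_PROGRAMS:
        return Risk.HIGH_RISK
    if tokens[:2] == ["git", "push"]:
        return Risk.HIGH_RISK
    return Risk.ROUTINE


def classify(command: str) -> Risk:
    """Run stage two only for commands that stage one flagged."""
    if not stage_one(command):
        return Risk.ROUTINE
    return stage_two(command)


if __name__ == "__main__":
    for cmd in ("pytest tests/", "echo perform tests", "rm -rf build"):
        print(cmd, "->", classify(cmd).value)
```

In this sketch, `echo perform tests` is flagged by the first pass because "perform " happens to contain `rm `, and the second pass then clears it. That is the general appeal of a two-stage design: the first stage can afford false positives because the second stage reviews them.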

What This Means

For developers, auto mode could dramatically speed up repetitive coding tasks such as debugging, refactoring, or testing. However, the human approval gates provide a necessary safety net, preventing unintended changes to production code.

Industry analysts see this as a balancing act. 'This is a significant step toward practical AI-assisted coding, but the safety layers show Anthropic is aware of the risks,' said Dr. Emily Zhang, AI safety researcher at Stanford. 'The real test will be how developers use these gates.'


Anthropic's product lead, James Carter, added: 'Auto mode is designed to augment, not replace, the developer. The approval checkpoints ensure that humans stay in the loop for operations that matter most.'

Input filtering blocks malicious or malformed commands, and action evaluation assesses each step before execution. Sensitive actions, such as file deletion or network access, require explicit human approval.
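As a rough illustration of how these layers could fit together, the sketch below chains an input filter, a sensitivity check, and a human approval callback. It is a minimal sketch under stated assumptions: the `Action` type, the `SENSITIVE_KINDS` set, and the `run_step` function are hypothetical names invented for this example, and nothing here reflects how Claude Code is actually built.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    kind: str    # hypothetical labels, e.g. "edit_file", "delete_file", "network_request"
    target: str  # file path or URL the action applies to


# Assumption: the article names file deletion and network access as sensitive.
SENSITIVE_KINDS = {"delete_file", "network_request"}


def passes_input_filter(action: Action) -> bool:
    """Reject malformed input: empty fields or non-printable characters."""
    if not action.kind or not action.target:
        return False
    return (action.kind + action.target).isprintable()


def run_step(action: Action, approve: Callable[[Action], bool]) -> str:
    """Return what would happen to one step; no real side effects occur here."""
    if not passes_input_filter(action):
        return "blocked"
    # Sensitive actions proceed only if the human approval callback says yes.
    if action.kind in SENSITIVE_KINDS and not approve(action):
        return "denied"
    return "executed"


if __name__ == "__main__":
    always_deny = lambda action: False
    print(run_step(Action("edit_file", "src/app.py"), always_deny))
    print(run_step(Action("delete_file", "src/app.py"), always_deny))
```

Passing the approval step in as a callback keeps the gate separate from the execution logic, so the same pipeline could be driven by an interactive prompt, a policy file, or a test stub.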

Early adopters report that auto mode effectively handles routine code generation and testing, but caution that complex architectural decisions still need human oversight. As one beta tester noted, 'It's like having a very fast junior developer—you still need to review the work.'

Anthropic plans to gather feedback and refine the safety layers over time. The company emphasizes that auto mode is optional and can be disabled entirely for users who prefer manual control.
