Codex Remote Control: Finally Coming to ChatGPT Mobile?

For months, developers using OpenAI's Codex have been clamoring for a feature that would let them take their coding assistant on the go. While competing tools like Anthropic's Claude already offer remote session control from a smartphone, Codex users have had to rely on makeshift workarounds. Now, signs point to OpenAI finally answering those calls. New text strings discovered in the Android app suggest native mobile control of Codex sessions is in the works—a move that could reshape how developers interact with their desktop workflows. This Q&A breaks down what's coming, why it matters, and what developers can expect.

What exactly is the new Codex feature being developed?

According to recent teardowns of the ChatGPT Android app, OpenAI appears to be building a feature that lets users remotely control their Codex sessions from a phone. Instead of being tethered to a desktop, developers would be able to start, pause, or interact with coding tasks on their PC through the ChatGPT mobile interface. This essentially mirrors what Anthropic's Claude already offers: a bridge between the desktop agent and a handheld device. The feature is not yet live, but the presence of specific user interface strings in the app indicates active development. Once released, it could eliminate the need for the third-party hacks that some users have cobbled together to simulate remote access. The upgrade would address a top community request: being able to monitor and guide AI-assisted coding while away from the main machine.

Why have Codex users been demanding this feature?

The demand stems from a fundamental workflow gap. Developers often need to check progress, tweak prompts, or debug while away from their workstation, whether they're commuting, in a meeting, or taking a break. With Claude, developers can simply pull out their phone and interact with the agent. Codex, on the other hand, has kept users locked to the desktop session. Over the past year, complaints on GitHub, Reddit, and developer forums grew louder. Users described manually setting up remote desktop apps or using terminal scripts to simulate remote control, but these hacks were unreliable and insecure. A native option would offer a polished, integrated experience. It's a quality-of-life improvement that aligns with the mobile-first nature of modern work. For a tool that's meant to boost productivity, being stuck at a desk felt like a limitation many were eager to see removed.

How does the new feature compare to what Anthropic's Claude offers?

Anthropic's Claude has long provided a remote control capability through its mobile app, allowing users to initiate or manage coding tasks on their PC from a phone. That feature has been a strong selling point for Claude among developers who value flexibility. The upcoming Codex upgrade aims to match that functionality. While Claude's implementation is already polished, OpenAI appears to be integrating the feature directly into the ChatGPT ecosystem, which could mean tighter integration with the assistant's broader capabilities. For example, Codex users might be able to use voice commands via ChatGPT to control their coding session—a possibility not yet offered by Claude. The core use case is identical: start or stop code generation, review output, and issue new instructions without touching the desktop. The competition is heating up, and this move by OpenAI signals that mobile freedom is now a baseline expectation for AI coding tools.

What evidence points to OpenAI working on this feature?

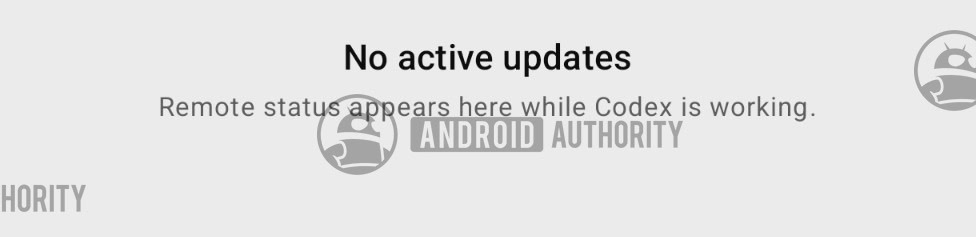

The strongest evidence comes from a teardown of the ChatGPT Android application. Developers and analysts discovered new text strings that explicitly reference remote session control, with phrases like “start remote session” and “connect to desktop agent.” These strings are hidden in the app's code and not yet visible to users, but their presence strongly indicates active engineering work. Additionally, the strings sit in a section of the codebase associated with Codex, not general ChatGPT features, which suggests the feature is purpose-built for coding workflows. OpenAI has not made an official announcement, but internal builds often contain such placeholder text before public release. The timing aligns with months of user feedback, making this a credible sneak peek at what's next. A beta rollout followed by wider availability seems plausible, though OpenAI has not confirmed any timeline.

When can developers expect to see the mobile Codex control feature?

There is no official release date yet. Based on typical development cycles for OpenAI features, and the fact that text strings are now appearing in the Android app, a beta version could arrive within the next one to two quarters. The company often tests major upgrades first with ChatGPT Plus subscribers before a broader launch. Developers should keep an eye on the ChatGPT app update logs and the official OpenAI blog for announcements. The feature could also debut simultaneously on iOS and Android, given that demand is similar on both platforms, although the evidence so far comes only from the Android app. While waiting, users can continue using the existing desktop Codex interface or third-party hacks, but native mobile control will likely be more stable and secure. For now, the best strategy is to follow updates from OpenAI and the developer community forums where leaks often appear first.

How will this remote control change the way developers use Codex?

If implemented well, remote control could transform Codex from a desk-bound assistant into an always-on coding partner. Developers could start a complex refactoring task before leaving the office, monitor it on the train, and tweak instructions from the couch at home. It enables async work: one could ask Codex to generate a test suite or find bugs overnight and review results from a phone in the morning. Team collaboration could also improve, as a manager might hop into a junior developer's Codex session remotely to offer guidance. The flexibility reduces context-switching—no more rushing back to the desk to fix a stalled task. However, it also raises questions about security and session management. OpenAI will need to ensure that remote control is encrypted and that sessions can be locked or expired. Overall, it's a feature that makes AI-assisted coding more adaptable to real-world work patterns.
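The session-management concern above can be made concrete with a small sketch. The Python below is purely illustrative and assumes nothing about how OpenAI will build the feature: `RemoteSession`, its `ttl_seconds` field, and the `lock` method are hypothetical names. It shows one way a remote session could expire after a period of inactivity or be locked explicitly.

```python
import time
from dataclasses import dataclass, field


@dataclass
class RemoteSession:
    """Hypothetical remote-control session; not an OpenAI API."""

    ttl_seconds: float = 900.0  # assumed idle timeout (15 minutes)
    locked: bool = False
    last_seen: float = field(default_factory=time.monotonic)

    def is_active(self, now=None):
        """Return True if the session is unlocked and not idle past its TTL."""
        now = time.monotonic() if now is None else now
        return not self.locked and (now - self.last_seen) <= self.ttl_seconds

    def touch(self, now=None):
        """Record activity; refuse if the session is locked or expired."""
        now = time.monotonic() if now is None else now
        if not self.is_active(now):
            raise PermissionError("session is locked or expired")
        self.last_seen = now

    def lock(self):
        """Lock the session so no further remote commands are accepted."""
        self.locked = True
```

In this sketch, every accepted command would call `touch()`, so an idle phone connection lapses on its own, while `lock()` gives the desktop user an explicit off switch.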

What are the potential challenges or drawbacks of mobile Codex control?

While the feature is exciting, it's not without potential pitfalls. The main concerns revolve around security and usability. Remote control over a coding agent means transmitting prompts and code snippets over the internet, so any vulnerability could expose sensitive proprietary code. OpenAI will need robust encryption and authentication, possibly two-factor verification for each remote connection. Another challenge is the small screen real estate of a phone; reviewing long output or editing complex scripts on a mobile interface could be frustrating. Voice commands may help, but they introduce accuracy issues in noisy environments. Additionally, when the desktop session is left unattended, a misinterpreted mobile command could trigger unintended actions with nobody there to catch them. Users currently relying on hacks may find the native version solves some problems but comes with a new learning curve. Nevertheless, the benefits likely outweigh these drawbacks, provided OpenAI invests in thoughtful UX design.
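To illustrate the authentication point, here is a minimal sketch of how a desktop agent could check that a command really came from a paired phone. It is an assumption-laden example, not a description of OpenAI's design: the shared `secret`, the envelope layout, the `MAX_AGE_SECONDS` freshness window, and the function names are all hypothetical. It uses HMAC-SHA256 from Python's standard library, and it only authenticates messages; transport encryption such as TLS would still be needed to keep prompts and code private.

```python
import hashlib
import hmac
import json

MAX_AGE_SECONDS = 30  # assumed freshness window to limit replayed commands


def sign_command(secret: bytes, command: str, issued_at: float) -> dict:
    """Build a command envelope with an HMAC-SHA256 tag (phone side)."""
    payload = json.dumps(
        {"command": command, "issued_at": issued_at}, sort_keys=True
    )
    tag = hmac.new(secret, payload.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_command(secret: bytes, envelope: dict, now: float) -> str:
    """Return the command if the tag matches and it is fresh (desktop side)."""
    expected = hmac.new(
        secret, envelope["payload"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    # compare_digest avoids leaking information through timing differences
    if not hmac.compare_digest(expected, envelope["tag"]):
        raise ValueError("bad signature")
    data = json.loads(envelope["payload"])
    if now - data["issued_at"] > MAX_AGE_SECONDS:
        raise ValueError("stale command")
    return data["command"]
```

A desktop agent built this way would reject both tampered commands and old ones that are replayed later, which addresses part of the unattended-session risk described above.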
