GitHub Unveils Fortress-Level Security for AI-Powered CI/CD Agents

Breaking: GitHub Deploys Multi-Layer Defense for Autonomous CI/CD Agents

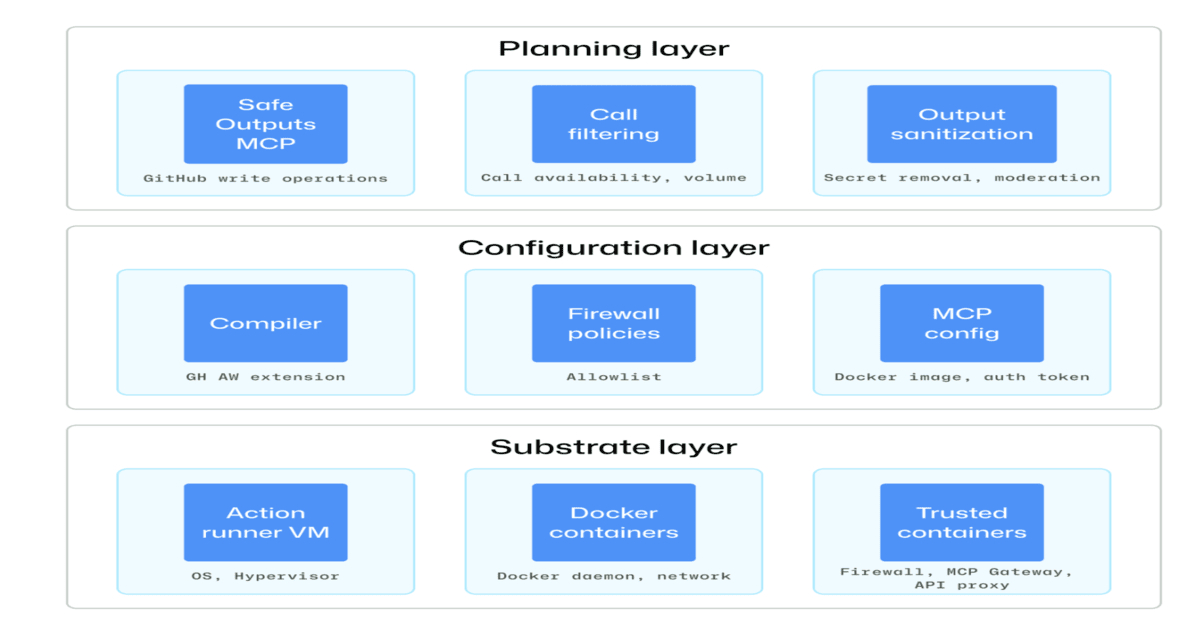

In a move to tame the risks of artificial intelligence in software development, GitHub has detailed a comprehensive security architecture designed to safeguard agentic workflows within continuous integration and continuous delivery (CI/CD) pipelines. The new framework addresses critical vulnerabilities including prompt injection attacks, privilege escalation, and unintended autonomous actions, according to an official blog post authored by Leela Kumili.

“Our goal is to enable developers to harness the power of AI agents without compromising the integrity of their build systems,” said a GitHub spokesperson in an exclusive statement. “This defense-in-depth approach ensures that even if an agent is compromised, the blast radius is contained.”

Real-Time Isolation and Constrained Execution

Central to the architecture are sandboxed execution environments that isolate each agent’s runtime. Permissions are strictly restricted to the minimum necessary for the task, reducing the attack surface. Every action taken by an agent is logged in an immutable audit trail, providing full traceability.
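One common way to make an audit trail tamper-evident is to chain entries by hash, so that altering an earlier record invalidates every record after it. The sketch below illustrates that general technique only; it is not GitHub's implementation, and the `AuditLog` class, its field names, and the `GENESIS` constant are hypothetical. An in-memory list is not truly immutable, so a real system would also need write-once storage.

```python
import hashlib
import json

# Hypothetical starting value for the chain (assumption, not from the article).
GENESIS = "0" * 64


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, agent_id: str, action: str, detail: str) -> str:
        """Record one agent action and return the new entry's hash."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        payload = {"agent_id": agent_id, "action": action, "detail": detail}
        entry = {
            "payload": payload,
            "prev_hash": prev_hash,
            "hash": _digest(prev_hash, payload),
        }
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("agent-1", "read_file", "README.md")
    log.append("agent-1", "open_pr", "fix typo")
    print(log.verify())
```

Because each hash depends on the previous one, an auditor only needs to trust the most recent hash to detect changes anywhere earlier in the log.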

“We treat every agent as a potential threat actor,” explained Sarah Chen, a security researcher on the project. “By applying zero-trust principles to AI agents, we ensure that no single failure point can cascade into a pipeline breach.”

Key Vulnerabilities Addressed

- Prompt Injection – Malicious inputs that manipulate agent behavior

- Privilege Escalation – Agents gaining unintended system access

- Unintended Actions – Autonomous decisions that alter codebases without human review

These risks have historically been difficult to mitigate because agents operate in dynamic environments where access patterns change. GitHub’s approach combines runtime monitoring with pre-emptive permission scoping. Each agent runs inside a jail that prevents lateral movement, even if the underlying network is compromised.
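Pre-emptive permission scoping can be pictured as a deny-by-default policy that is fixed before the agent starts, with sensitive actions routed to a human reviewer. The following is a minimal sketch under that assumption; the `AgentScope` class, the `Decision` values, and the action names are hypothetical and are not taken from GitHub's design.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_REVIEW = "needs_review"
    DENY = "deny"


@dataclass(frozen=True)
class AgentScope:
    """Immutable set of actions granted to one agent run (hypothetical)."""

    allowed: frozenset
    review_required: frozenset = frozenset()

    def check(self, action: str) -> Decision:
        # Sensitive actions always go to a human, even if also allowed.
        if action in self.review_required:
            return Decision.NEEDS_REVIEW
        if action in self.allowed:
            return Decision.ALLOW
        # Anything not explicitly granted is denied by default.
        return Decision.DENY


if __name__ == "__main__":
    scope = AgentScope(
        allowed=frozenset({"read_repo", "run_tests"}),
        review_required=frozenset({"push_commit"}),
    )
    for action in ("run_tests", "push_commit", "read_secrets"):
        print(action, scope.check(action).value)
```

Freezing the scope means a compromised agent cannot widen its own permissions at runtime; any change has to come from outside the agent's process.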

“We’re essentially building a prison for AI agents,” said Mike Rodriguez, lead engineer on the security team. “They have exactly enough freedom to do their job, but not enough to escape.”

Background

Agentic workflows, in which AI systems autonomously write code, run tests, or deploy updates, have exploded in popularity among DevOps teams. However, the same autonomy introduces new attack vectors. Traditional CI/CD security measures are ill-equipped to handle the dynamic and unpredictable nature of LLM-powered agents.

Industry reports have documented multiple instances of prompt injection causing agents to exfiltrate secrets or modify production code. GitHub’s latest design responds directly to these emerging threats, building on existing GitHub Actions security controls to create a specialized layer for AI-driven tasks.

What This Means

For organizations adopting AI-assisted development, this framework provides a blueprint for secure integration. The emphasis on auditability means teams can now trace every decision made by an agent, aiding both debugging and compliance. Observers note that this could accelerate enterprise adoption of AI agents in critical paths.

“This sets a new baseline for security in the AI-DevOps era,” said Dr. Raj Patel, a cybersecurity professor at MIT. “If widely adopted, it could prevent the next major supply chain attack.”

GitHub plans to roll out these features gradually, starting with beta access for GitHub Enterprise customers later this quarter. Developers are encouraged to review the documentation and start testing their own agentic workflows against the new security controls. The broader public release timeline remains unconfirmed, but insiders indicate the framework will likely be open-sourced to encourage community contributions and rapid iteration.

